I was on a Zoom call recently with the CISO of a large, multibillion-dollar enterprise.

We were discussing the bottlenecks in his organization.

It wasn’t the budget.

It wasn’t a lack of talent.

It was the “Business As Usual” trap.

He told me that his leadership team was overwhelmed by operations. They were spending all their energy keeping the lights on. But the real problem surfaced whenever he tried to push them toward AI innovation.

Their excuse was always the same: “We can’t start yet. Our data isn’t clean enough.”

That’s when the CISO shared a perspective that every leader needs to hear.

He told me about the F-16 fighter jet. That plane flies at supersonic speeds, relying on sensors that are constantly dealing with noise, interference, and imperfect data. It doesn’t wait for perfect conditions. It adjusts and executes.

He connected it with his situation.

“If a fighter jet, something that deals with life-and-death risk, can operate with imperfect data... why are we stopping our business innovation because of imperfect spreadsheets?”

He is right.

The Insight

The CISO was right about the fighter jet. But the problem in most organizations runs deeper than just bad habits.

If you look at why your team is stalling, you’ll find 3 distinct traps disguised as “strategy.”

To move forward, dismantle them one by one.

1. The Psychological Trap

In many boardrooms, “Waiting for data quality” is a sophisticated way of saying “I am afraid.”

For a middle manager, the safest place to be is in the “Preparation Phase.” As long as they are “cleaning the data” or “auditing the warehouse,” they cannot fail.

They are busy.

They are spending their budgets.

But they are not exposed to risk.

The moment they launch a live model, they will get exposed. What if the AI hallucinates? What if it gives a wrong answer to a client?

So, they use Data Governance as a shield. They set a standard of perfection that is impossible to reach, so they never have to face the risk of launching. It looks like prudence, but it is actually paralysis.

2. The Technical Trap

For the last 20 years, we taught our teams that software is fragile. We raised a generation of managers on Excel and SQL.

In that world, Deterministic Logic rules.

If you format one cell in your spreadsheet as text instead of a number, the entire financial model can break. If your SQL query has one typo, the database returns an error. Perfection wasn’t a luxury; it was a requirement for the system to function at all.

But AI operates on Probabilistic Logic.

Modern LLMs and Machine Learning systems are designed to tolerate noise. Much like a human reader, they look at the context, discount the noise, and find the signal.

When you demand 100% clean data before starting an AI pilot, you are applying the rules of the ’90s in 2026. You are trying to sterilize the environment for a tool that was built to fight in the mud.

3. The ROI Trap

The cost of waiting for perfect data is almost always higher than the cost of fixing errors later.

Imagine two companies.

Company A spends 12 months scrubbing their data warehouse. They haven’t run a single model yet.

Company B launches a pilot today with “dirty” data. Their model is only 70% accurate.

In a year, when Company A launches, Company B will have spent 12 months gathering user feedback. They will have retrained their model on edge cases and improved their prompts.

Company B’s model will now be 95% accurate and fully integrated into their workflow. Company A, on the other hand, will have a perfect database and an untested model with zero real-world experience.

Data cleaning is not a gate you must pass through to enter the world of AI. It is a continuous process that happens after you launch.

The Process

So, how do you break the paralysis?

You stop treating AI implementation like a construction project (linear) and start treating it like a startup (iterative).

We call this the “Launch & Clean” Protocol.

Here is the 3-step map to moving from “Analysis Paralysis” to “Actionable Intelligence” in the next 30 days.

1: Define Your “Minimum Viable Data” (MVD)

Stop asking, “Is our data perfect?” Start asking, “Is our data useful?”

In the startup world, we have the MVP (Minimum Viable Product). In corporate AI, you need the MVD.

Identify the specific dataset needed for one narrow use case. E.g., “Customer Support Logs from 2024” or “Q3 Logistics Manifests”.

Aim for 70% quality. If the data is readable by a human, it is likely readable by an LLM. Do not spend budget cleaning historical data that might not even be relevant to the model’s output.
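If you want a rough number behind “70% quality,” here is a minimal sketch of one way to estimate it. It assumes a record is “usable” when a few required fields are present and non-blank; that definition, the field names, and the sample tickets are all hypothetical, so swap in whatever “readable by a human” means for your use case.

```python
def is_usable(record: dict, required_fields: list[str]) -> bool:
    """Assumed definition: every required field is present and non-blank."""
    for name in required_fields:
        value = record.get(name)
        if value is None or not str(value).strip():
            return False
    return True


def usable_share(records: list[dict], required_fields: list[str]) -> float:
    """Fraction of records that pass the usability check."""
    if not records:
        return 0.0
    usable = sum(1 for r in records if is_usable(r, required_fields))
    return usable / len(records)


def meets_mvd(records: list[dict], required_fields: list[str],
              threshold: float = 0.70) -> bool:
    """True when the dataset clears the Minimum Viable Data bar."""
    return usable_share(records, required_fields) >= threshold


# Made-up support tickets, for illustration only.
tickets = [
    {"customer_id": "C-1", "message": "Refund not received"},
    {"customer_id": "C-2", "message": "   "},              # blank message
    {"customer_id": "C-3", "message": "Cannot log in"},
    {"customer_id": "C-4", "message": "Wrong item shipped"},
]

share = usable_share(tickets, ["customer_id", "message"])
ready = meets_mvd(tickets, ["customer_id", "message"])
```

The point of the check is to answer “is this useful enough to start?” in minutes, not to launch another audit.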

2: Sandbox the “Dirty” Pilot

Before you clean a single row, feed your “dirty” MVD into a secure, sandboxed AI environment. Run the model. Ask it questions. Generate predictions.

You will likely find that the AI ignores the formatting errors that would have broken a SQL query.

Measure the Utility, not the Cleanliness. Did the AI give a helpful answer despite the bad data? If the answer is “Yes,” you just saved yourself six months of data engineering work.
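Measuring utility can be as simple as having a reviewer mark each pilot answer as helpful or not, then comparing the rate for inputs you already knew were messy. The sketch below assumes that kind of review sheet; the field names (`dirty_input`, `helpful`) and the sample rows are invented for illustration.

```python
def utility_rate(reviews: list[dict]) -> float:
    """Share of pilot answers that a reviewer marked as helpful."""
    if not reviews:
        return 0.0
    return sum(1 for r in reviews if r["helpful"]) / len(reviews)


def utility_by_input_quality(reviews: list[dict]) -> dict[str, float]:
    """Split the helpful rate by whether the input data was known to be dirty."""
    dirty = [r for r in reviews if r["dirty_input"]]
    clean = [r for r in reviews if not r["dirty_input"]]
    return {"dirty": utility_rate(dirty), "clean": utility_rate(clean)}


# Made-up review sheet from a sandboxed pilot.
pilot_reviews = [
    {"question_id": 1, "dirty_input": True, "helpful": True},
    {"question_id": 2, "dirty_input": True, "helpful": True},
    {"question_id": 3, "dirty_input": True, "helpful": False},
    {"question_id": 4, "dirty_input": False, "helpful": True},
]

rates = utility_by_input_quality(pilot_reviews)
```

If the “dirty” rate is close to the “clean” rate, that is a sign the cleanup you were planning may not be the bottleneck.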

3: Bring a Human into the Loop

This is the most critical shift. Instead of cleaning everything before you start, only clean the data points that cause the AI to fail.

The Workflow:

The AI outputs a result.

A human expert (your “Pilot”) reviews it.

If the AI makes a mistake because of bad data, you clean that specific record.

Feed the correction back into the system.

This turns your AI pilot into a data-cleaning engine.

The model identifies the gaps for you.

You only spend the budget fixing what actually matters.
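The workflow above can be sketched as a small loop. This is an illustration under stated assumptions, not a production design: `predict`, `review`, and `clean` are hypothetical stand-ins for your model, your human “Pilot,” and your data fix, and the toy keyword classifier exists only so the example runs.

```python
from typing import Callable


def launch_and_clean(records: dict, predict: Callable, review: Callable,
                     clean: Callable) -> dict:
    """One pass: predict, review, and clean only the records that fail review."""
    log = {"accepted": [], "cleaned": []}
    for record_id, record in records.items():
        output = predict(record)              # the AI outputs a result
        if review(record, output):            # a human expert reviews it
            log["accepted"].append(record_id)
            continue
        records[record_id] = clean(record)    # clean that specific record
        log["cleaned"].append(record_id)      # and feed the correction back
    return log


# Toy stand-ins so the sketch runs end to end (all hypothetical).
def predict(record: dict) -> str:
    text = record.get("text", "").lower()
    if "refund" in text:
        return "billing"
    if "login" in text:
        return "access"
    return "unknown"


def review(record: dict, output: str) -> bool:
    # Stand-in for a human judgment: reject answers the model could not place.
    return output != "unknown"


def clean(record: dict) -> dict:
    # Targeted fix: fill a blank body from the subject line.
    fixed = dict(record)
    if not fixed.get("text", "").strip():
        fixed["text"] = fixed.get("subject", "")
    return fixed


tickets = {
    1: {"text": "Refund not received"},
    2: {"text": "", "subject": "Login broken"},
    3: {"text": "Cannot login since Monday"},
}

first_pass = launch_and_clean(tickets, predict, review, clean)
second_pass = launch_and_clean(tickets, predict, review, clean)
```

In this toy run only ticket 2 gets cleaned, and the second pass accepts all three. Records that never cause a failure never cost you any cleanup time.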

Case Study

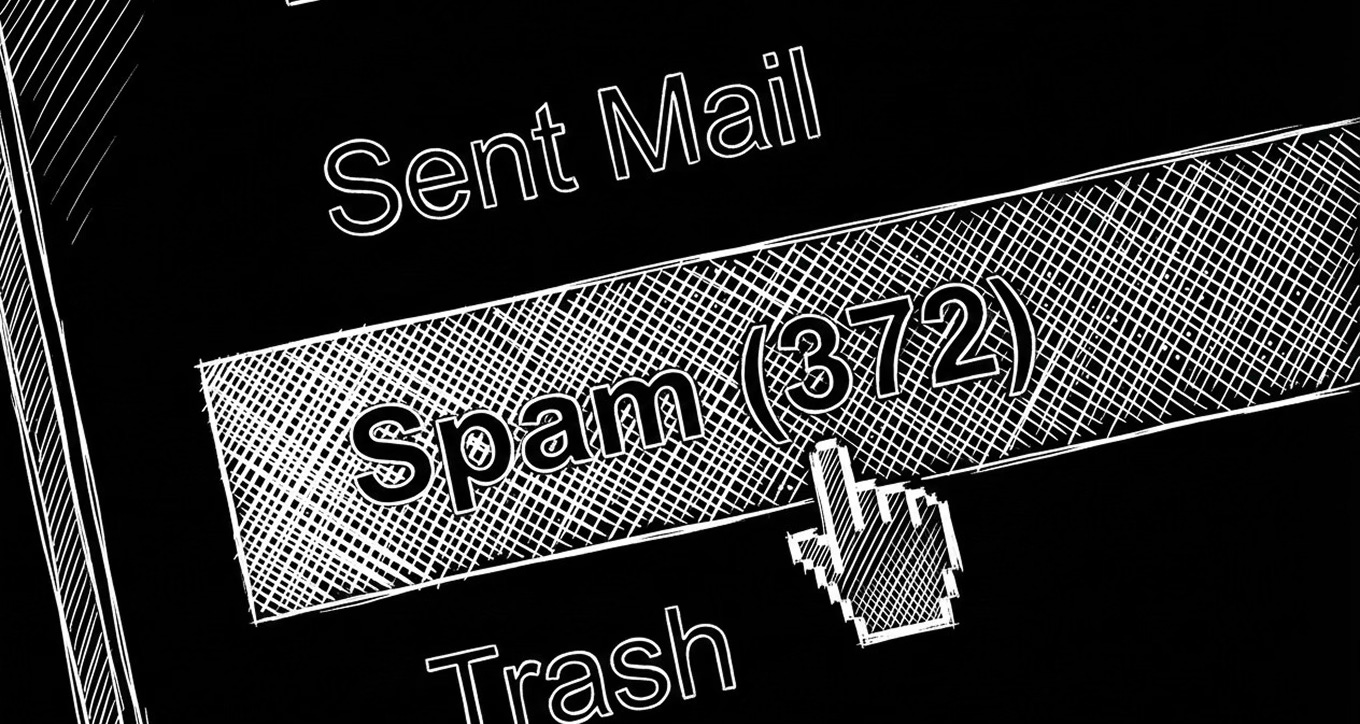

Look at one of the most successful AI implementations in history: Gmail’s spam detection.

In the early days, email providers launched spam filters with very imperfect data. The system had to deal with:

Mislabeled emails

Inconsistent formats

Spam tactics that changed daily

The models were far from perfect, but they were good enough to block the obvious junk. The magic happened after the launch.

Every time a user clicked “Spam” or “Not Spam,” they weren’t just organizing their inbox... they were labeling data for Google.

Engineers didn’t waste years manually cleaning decades of email logs before launching. They let the model surface mistakes in the wild. They corrected only those specific errors through user feedback and retrained.

The Result? Noisy data + Human-in-the-Loop feedback = The highly accurate filters we rely on today.

If Google had waited for a perfectly labeled, perfectly clean dataset before launching, we’d all still be manually deleting junk mail today. Instead, they shipped an imperfect model and cleaned it later.

What Now?

If you’re serious about harnessing AI and taking the driver’s seat of your future, here are the things you’ll need.

1. Access to rich AI education.

Unfortunately, this is extremely rare. Most of the internet is filled with AI sensationalism rather than deep education.

You can subscribe to this newsletter for such in-depth AI education for free.

If you lead an enterprise, I recommend you join our exclusive WhatsApp channel (only qualified participants are allowed).

2. Roadmap and Guidance

There are many roadmaps to becoming an expert in AI.

95% of them are for data scientists and programmers.

4.9% are for freelancers and entrepreneurs.

Hardly any are for corporate professionals.

3. Real 1:1 Training

If you’re an L&D Manager, HR Director, CHRO, or CEO, hire me to train or inspire your employees.

Thank you for reading this letter.

See you in the boardroom.